Trading bad inefficiency for good inefficiency at the Technology Leadership Forum

Six idiosyncratic observations after a day with enterprise tech leaders

The Chief Infrastructure Technology Executives’ Roundtable (CITER) met in October 2009 at Oceana’s old location on East 53rd Street. Twelve heads of infrastructure met over dinner to discuss operating models. It was cathartic for them. The folks from banking and those from pharma debated who has the most intrusive regulators. At the end one participant told me: “My wife doesn’t understand it. My boss doesn’t understand it… My dog doesn’t understand it. I come to this dinner and I’m around the table with a bunch of other people who do understand what I’m wrestling with.”

CITER evolved and expanded over the years. After COVID, it re-emerged as the Cloud Leadership Forum (CLF), which was bigger and more ambitious. A couple of years ago CLF became the Technology Leadership Forum (TLF) as cloud became less of a distinct issue and more an organic part of the way you run enterprise technology.

TLF makes for two of my favorite days of the year. An opportunity to spend time with 50 of the most thoughtful enterprise technology executives I can find and discuss issues like AI platforms, AI security, product operating models, technology innovation and semantic layers/knowledge graphs. As you would expect, in recent sessions everyone there has wrestled with how AI can and should change enterprise technology.

Here are a few of my idiosyncratic takeaways from the day:

1. You can use AI to trade bad inefficiency for good inefficiency.

2. Mythos is cause for determination, not panic.

3. Transforming a business domain with AI requires hard problems and a number.

4. AI will disrupt, not destroy B2B software.

5. Token economics might not mirror ride-sharing economics.

6. Tech economics must focus on incentives, not precision.

1. You can use AI to trade bad inefficiency for good inefficiency

For decades, institutions removed craftsmanship, personalization and tactile experiences because the coordination costs were too high. AI may allow us to recapture warmth and texture — not despite efficiency gains, but because of them.

For each TLF session, we assemble a directory with the name, photo, role and contact information for every participant and faculty member. Historically, this was painful. Participant changes, updated photos and corrections to biographies required us to send a request to the visual aids team and wait 24 hours for them to update the document manually with design software.

A few years ago, we saved time by eliminating the printed version altogether. We just emailed participants a PDF. Operationally, it was more efficient. Even if people missed the physical book.

Agent Serena transformed our tradeoffs. She stored TLF information in a graph of Markdown files and allowed us to turn the event program into a composable document, assembled via a skill. We needed to update a photo or add a new participant? Just two instructions: one to update the information and a second to regenerate the PDF.
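To make the composable-document idea concrete, here is a minimal sketch. It assumes each participant lives in its own Markdown file with a few `key: value` lines followed by a biography. The file layout, the field names and the `assemble_directory` function are hypothetical illustrations, not a description of how Agent Serena actually works, and the PDF rendering step is left out.

```python
from pathlib import Path
import tempfile


def parse_entry(path: Path) -> dict:
    """Parse one file: 'key: value' lines, a blank line, then a free-text bio."""
    header, _, bio = path.read_text(encoding="utf-8").partition("\n\n")
    entry = {"bio": bio.strip()}
    for line in header.splitlines():
        key, sep, value = line.partition(":")
        if sep:  # skip lines that are not 'key: value'
            entry[key.strip().lower()] = value.strip()
    return entry


def assemble_directory(folder: Path) -> str:
    """Combine every *.md entry in the folder into one Markdown document, sorted by name."""
    entries = sorted(
        (parse_entry(p) for p in folder.glob("*.md")),
        key=lambda e: e.get("name", ""),
    )
    sections = [
        f"## {e.get('name', 'Unknown')}\n\n{e.get('role', '')}\n\n{e['bio']}"
        for e in entries
    ]
    return "# Participant directory\n\n" + "\n\n".join(sections) + "\n"


def demo() -> str:
    """Write two made-up entries to a temporary folder and assemble them."""
    with tempfile.TemporaryDirectory() as tmp:
        folder = Path(tmp)
        (folder / "blake.md").write_text(
            "name: Blake Example\nrole: CTO, Example Bank\n\nBio text for Blake.\n",
            encoding="utf-8",
        )
        (folder / "alex.md").write_text(
            "name: Alex Sample\nrole: CIO, Sample Pharma\n\nBio text for Alex.\n",
            encoding="utf-8",
        )
        return assemble_directory(folder)


if __name__ == "__main__":
    print(demo())
```

The point of the design is that a correction touches one small file, and the full document is regenerated rather than edited by hand.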

We used the time we recovered from pre-press production to print old-fashioned spiral-bound books containing the program, faculty information and breakout materials. They looked great. Participants loved them.

Where else can we use AI to fund the return of things lost to the imperative of rationalization?

2. Mythos is cause for determination, not panic

At the second CLF session in 2021 we held a cloud security breakout and nobody cared. It drew fewer participants than any other breakout. This year my colleagues Sven Blumberg and Rich Isenberg led a plenary session on what advancing frontier models mean for technology risk, and I had to drag them off the stage. The crowd would have kept discussing this issue for another couple of hours if I had let them.

Clearly the threat is real, and not only about zero days. Advanced models will provide attackers with unprecedented visibility into corporate technology environments — security by obscurity may not be a good strategy now, and it will be even less tenable shortly. For years the attackers with the most advanced capabilities didn’t have the destructive intent. And the attackers with the most destructive intent lacked access to the most destructive capabilities. New models can perform more complicated processes and allow a much broader range of attackers to employ new vectors.

US-based frontier labs may succeed in creating guardrails that limit malicious use of their products. None of those will apply to locally hosted, open weight models with capabilities that trail offerings from Anthropic, OpenAI and Google by months rather than years.

And TLF members are concerned. None of them believed that their company’s cybersecurity program was keeping pace with AI-enabled cybersecurity threats. Their boards were asking how fast to move and where to start, questions they didn’t entirely know how to answer. And they had doubts about many of their software vendors. Patch velocity had increased, but not patch quality. They fear many vendor patches will create new vulnerabilities as they try to remediate older ones. One member said: “I don’t think vendors understand what they’re building.”

And yet I heard determination rather than panic or resignation. Some of this may be years of lived experience. Nearly fifteen years ago the US Secretary of Defense warned of a cyber Pearl Harbor. It hasn’t happened. Each year brings new challenges, but enterprise technology as a domain manages to stave off the cataclysm. At least for another few months.

TLF members indicated that they would push for transparency into their environments. They could isolate systems they couldn’t patch on dedicated network segments. They would automate their patching processes. And over time they might use AI-enabled software engineering to remediate technical debt — and build better software in place of the fragile environments they have now. All of which will require determination, funding and the ability to think in systems. One member said: “The leadership team thinks about innovation over here and risk over there — we haven’t figured out how to discuss this in an integrated way and make tradeoffs.”

3. Transforming a business domain with AI requires hard problems and a number

Almost no TLF members said their companies had deployed AI at scale. Most didn’t have any agents in production. Two sessions explored how to build organizational conviction and momentum for using AI to reshape business domains.

The session on building agentic workers wrestled with the shape and priorities of a transformation. Everyone agreed that you need a T-shaped transformation: one in which you pursue visible change early in some areas to create enthusiasm in the executive team and, at the same time, build out the underlying technology platform to facilitate scaling and contain the technical debt you create.

Which led to a more contentious debate: if you create agentic workers, which workers should you start with? Yes, you need supportive business leaders, but you also need to pick hard problems. If you focus on easy wins in defining your digital workers, you give ammunition to those who describe AI as a toy rather than a tool.

My colleagues Brian Elliot and Mark Gu led a session based on their experiences deploying an AI operating system to drive business change. You need a platform that goes far beyond agent management to data pipelines, knowledge orchestration (often via a graph) and connection to traditional machine learning modules.

Starting with two or three related, difficult business problems allows you to justify the platform investment — but also implies organizational disruption. You will redesign roles for human employees, require new forms of collaboration and upend long-standing assumptions. Legal will resist using LLMs to process any employee data. Security teams may not yet be comfortable with agentic identities. HR is paying high salaries for AI experts who may not manage large teams. These fights are happening now.

You overcome resistance with a number: customer retention, operating margin, inventory turns — some metric with a direct enough relationship to share price that senior executives will say to those who push back on change, “I hear what you are saying, but I want my thing. So figure out a way to make it work.”

4. AI will disrupt, not destroy B2B software

We had a new type of plenary session at TLF last week — a panel of venture capitalists. Ed Sim from Boldstart, Daniel Frankenstein from Joule Ventures and Will Summerlin from Autopilot engaged with the group on how enterprises can best work with VC-funded companies and how AI will change B2B software markets.

Wow, there is some frustration out there! What do TLF members see from their incumbent software vendors? Slower innovation, degraded quality and more aggressive negotiation. They think some providers see their products as “falling knives” and have resolved to extract as much cash as they can from the portfolio before it declines into irrelevance. Others simply don’t get AI — they want extortionate rates for unimpressive capabilities that only reinforce silos between different parts of the environment.

Will enterprise software get eaten away from above and below? Will the layer between what companies build themselves and what frontier labs provide disappear as companies use AI-enabled engineering productivity to escape SaaS’s “one size fits none” business model and LLM-providers move up the stack?

History and market structure suggest not. Remember all the predictions about a decade ago that the cloud service providers would eat the entire enterprise software market? That (checks notes) didn’t happen. Enterprise software is an archipelago of hundreds of micro-niches, each with its own needs and idiosyncrasies. Many domains have astronomical switching costs. And companies resist letting one vendor dominate their technology future. AI changes none of that.

In some cases TLF members will make different buy-build decisions, but they have barely begun to uplift their own software engineering capabilities — and they want the specialized content or capability that third-party software can provide. They may want to build more, but they don’t want to build everything themselves.

The VCs on our panel have no illusions about the difficulty in building a disruptive company. They all expressed skepticism about the heady numbers some infant AI companies are reporting, observing that much of it might be trial rather than enduring revenue. Ed also pointed out that some startups are just white-labeling tokens from LLM-providers and would struggle if token economics became more challenging.

Still, they all believe that they face a generational opportunity to build companies that will use AI to displace incumbent software companies that are not meeting the moment. Ed has written about that on his blog. I don’t think the CIOs and CTOs in TLF disagree with them.

5. Token economics might not mirror ride-sharing economics

Today most TLF members have little idea what they spend on inferencing. Their companies haven’t hit the steep part of the adoption curve yet. Low volumes mean low costs. Could that change? And what should they do if it does?

Infrapartners CTO Harqs Singh sees no slowdown in data center investment. He gets calls asking if they could launch a 100MW project Monday based on a PO sent over the weekend. Harqs turns constructing a 100MW facility into an industrial process, putting capacity in the ground more quickly and at less cost.

But that doesn’t remove other constraints in the system. You still need GPUs or TPUs to put in the data center, and you need electricity to run the chips. These capacity constraints — and the need for investors to generate a return on capital — cause many TLF members to ask whether we are in the early days of ride-sharing.

The comparison runs like this. As Uber sought to grab market share, riders paid roughly 41% of the actual cost of each trip; investors covered the rest. Then fares rose 92% between 2018 and 2021, and some jurisdictions levied additional fees or taxes on each ride.

The ride-sharing analogy isn’t perfect. Ride-sharing promised convenience rather than massive corporate productivity improvements. American companies and public institutions spend USD 16 trillion a year on employee compensation, and frontier labs bet that any possible token costs will pale next to efficiencies there. Inferencing costs are probably declining by 90 percent per year, whereas ride-sharing investors lost a bet that autonomous vehicles would transform their cost structure by now. Ride-sharing also doesn’t have much in the way of attractive adjacencies. Frontier labs believe they can profitably move up the stack into applications and services, much as some railroads generated more returns dealing in real estate near their stations than from selling tickets.
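A back-of-the-envelope check helps here. The sketch below takes the figures quoted above at face value and adds two simplifying assumptions of its own: that the cost of a trip stayed flat while fares rose, and that token volume grows by a constant multiple each year. The function names and the tenfold growth scenario are illustrative only.

```python
def price_to_cost_ratio(initial_ratio: float, price_increase: float) -> float:
    """Share of cost covered by the price after a fractional increase, holding cost flat."""
    return initial_ratio * (1.0 + price_increase)


def increase_needed_for_break_even(initial_ratio: float) -> float:
    """Fractional price increase required before the price covers the full cost."""
    return 1.0 / initial_ratio - 1.0


def spend_multiple(volume_growth: float, annual_price_decline: float, years: int) -> float:
    """Total spend relative to today if volume multiplies by `volume_growth`
    each year while the unit price falls by `annual_price_decline` each year."""
    return (volume_growth * (1.0 - annual_price_decline)) ** years


if __name__ == "__main__":
    # Riders paying 41% of cost, then a 92% fare increase (figures from the text).
    print(round(price_to_cost_ratio(0.41, 0.92), 2))     # 0.79
    print(round(increase_needed_for_break_even(0.41), 2))  # 1.44
    # Assumed scenario: unit price falls 90% a year, usage grows tenfold a year.
    print(round(spend_multiple(10.0, 0.90, 3), 2))       # 1.0
```

Under those assumptions, a 92% fare increase still leaves riders covering only about 79% of the original cost, and total inferencing spend rises only if usage grows more than tenfold a year while unit prices keep falling at the quoted rate.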

Even so, you must plan for a world of escalating inferencing costs. As Ed Sim pointed out, “Compute is already fully utilized and Anthropic may ration with a high minimum spend. Token prices are going through the roof — you need to plan for scarcity.”

In response, TLF members expect their companies to do three things: develop more insight into inferencing economics so they can focus on accretive use cases; apply FinOps to AI so their applications use tokens more efficiently; and diversify their usage, for example by running open weight models on neo-cloud infrastructure.

6. Tech economics must focus on incentives, not precision

I have been fascinated for decades by enterprise technology economics. What an interesting and complicated machine! Requests and money go in one side. They interact with policies, existing technology environments, operational processes, organizational capabilities and vendor arrangements. Systems and services come out the other side. How can we better understand the dials you turn to change the relationship between inputs and outputs?

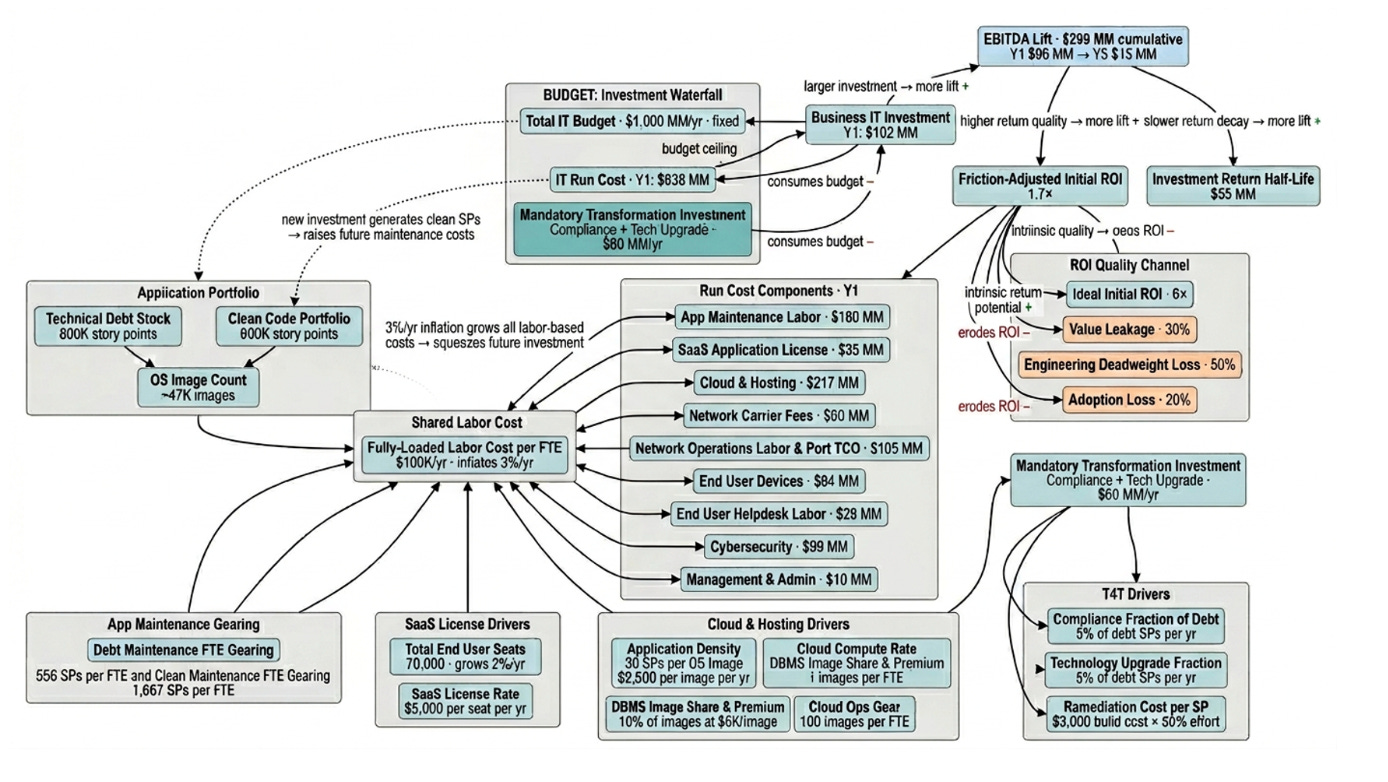

I asked teams to create several generations of spreadsheet models over the years — all eventually collapsed under their own weight. Cursor and Claude Code helped me build the model that describes enterprise technology the way it is — an intricate, dynamic system, with dozens of economic and operational dependencies between nodes in the graph. Here’s what it looks like:

The model demonstrates several important ideas:

- Each company faces a pipeline of business-technology investment opportunities that you can stack rank based on ROI, and the ROI declines according to a half-life.

- Incremental EBITDA lift each year depends on business-driven investment, adjusted for value leakage, engineering deadweight loss and adoption loss. Because these factors are multiplicative, collectively they can consume three-quarters of business investment.

- Each million dollars of business-technology investment generates USD 150,000-250,000 in incremental annual run and mandatory investment costs, whether you get business value or not.

- Given flat expenditures, companies will tend toward an “IT doom loop,” where run costs and mandatory investments consume the entire budget, driving EBITDA lift to zero.

- AI-enabled engineering (or spec-driven development) changes enterprise technology economics. It improves the ROI of retiring technical debt (reducing application maintenance costs) and run automation (reducing infrastructure costs), freeing up more resources to invest in initiatives that improve revenue or reduce operational cost. It also reduces engineering deadweight loss, so you get more EBITDA lift out of each dollar invested. That’s why investments in AI-enabled software engineering will deliver better-than-linear returns for many companies.
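The dynamics in the list above can be sketched in a few lines. This is a toy, not the model described in this post: the budget, the starting run cost, the base ROI, the half-life and the three loss rates are all assumed values chosen for illustration. Only the run-cost drag of 0.15 to 0.25 per dollar invested comes from the text.

```python
from dataclasses import dataclass


@dataclass
class Assumptions:
    budget: float = 100.0          # flat annual technology budget (illustrative units)
    run_cost: float = 60.0         # starting run + mandatory spend (assumed)
    run_drag: float = 0.20         # new annual run cost per unit invested (text: 0.15-0.25)
    base_roi: float = 0.50         # annual return on the best opportunity (assumed)
    roi_half_life: float = 200.0   # cumulative investment at which ROI halves (assumed)
    value_leakage: float = 0.30    # assumed
    deadweight_loss: float = 0.40  # assumed
    adoption_loss: float = 0.40    # assumed


def retained_fraction(a: Assumptions) -> float:
    """Share of investment that survives the three multiplicative losses."""
    return (1 - a.value_leakage) * (1 - a.deadweight_loss) * (1 - a.adoption_loss)


def simulate(a: Assumptions, years: int) -> list[dict]:
    """Year-by-year run cost, discretionary investment and EBITDA lift under a flat budget."""
    rows = []
    run_cost, cumulative = a.run_cost, 0.0
    for year in range(1, years + 1):
        # Whatever the budget does not spend on run costs is available to invest.
        investment = max(a.budget - run_cost, 0.0)
        # ROI decays with a half-life as the best opportunities are used up.
        roi = a.base_roi * 0.5 ** (cumulative / a.roi_half_life)
        lift = investment * roi * retained_fraction(a)
        rows.append({"year": year, "run_cost": run_cost,
                     "investment": investment, "lift": lift})
        cumulative += investment
        # Every unit invested adds permanent run and mandatory cost next year.
        run_cost += a.run_drag * investment
    return rows


if __name__ == "__main__":
    for row in simulate(Assumptions(), 10):
        print(row)
```

With these assumed loss rates, only about a quarter of each dollar invested turns into value, and under a flat budget the discretionary investment shrinks by the run-cost drag every year, so the lift trends toward zero. Rerunning with, say, `Assumptions(deadweight_loss=0.2, run_drag=0.15)` gives a rough feel for how lower deadweight loss and cheaper run costs change the curve.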

This resonated with many participants. They agreed both with the underlying economic dynamics and the need for management teams to understand them better in making investment decisions. The catch? The tendency for accounting to crowd out economic insight. Many bore scars from battles over tech chargebacks, and worried that any calculation of a unit cost would set off endless debates about whether a server image should cost USD 3,100 or USD 3,200.

As always, leadership will be key here. Members of the management team must make clear that they want transparency into technology cost and value to make better decisions, not to push allocations from one line of business to the other.

It was a great session — I look forward to the one in the Fall in NYC!

thanks for having me, what a great community and learned a ton - still so so early for agentic workflows in the enterprise and so much more building to do!

As a student immersed in Leadership & Management, particularly through the lens of Operations Management principles, I have come to recognize a critical truth:

Effective leadership begins with taking responsibility for the system as a whole.

Dr. W. Edwards Deming made a profound observation when he stated that the majority of organizational outcomes are determined by management systems rather than individual contributions. It is often leadership that shapes the operational environment, directly influencing overall performance.

What is especially compelling in this discussion is not just the potential of artificial intelligence, but also the crucial readiness of organizations to harness it successfully.

Today, AI is deeply integrated into every facet of our operations:

- enterprise systems

- healthcare

- education

- infrastructure

- government operations

- and everyday workflows

The capability is no longer a distant possibility; it is here now. The pressing challenge we face is redesigning our systems to capitalize on this technology fully.

From an operations perspective, organizations must urgently begin identifying:

- bottlenecks

- throughput limitations

- process velocity constraints

- governance gaps

- infrastructure readiness

- workflow instabilities

- and failures in cross-functional communication

This is where decisive leadership becomes paramount.

Institutions like McKinsey & Company are uniquely positioned at the crossroads of:

- strategy

- technology

- operations

- organizational transformation

- and executive decision-making

This position not only provides them with significant influence but also instills a responsibility to lead.

As AI capabilities continue to expand, there is an increasing imperative for transparency among organizations, educators, policymakers, consultants, and business leaders. We must understand:

- where our systems are faltering

- what operational constraints we face

- what redesign efforts are essential

- and how we can realistically adapt

It is crucial to recognize that AI functions within established systems.

It magnifies the existing operational conditions and highlights their importance.

As this capability evolves rapidly, the real question becomes whether our leadership frameworks, governance structures, and institutions can transform swiftly enough to manage these advancements responsibly.

Now is the time for proactive leadership and strategic transformation. Together, we can ensure that our organizations not only adapt but thrive in this new era of technological advancement.

Amina Wilson

WGU.edu